Compass AI Assistant

After the initial launch of MongoDB’s Compass AI assistant, I led foundational research to understand what developers needed beyond a chatbot-style experience.

These insights informed feature prioritization for the next release and shaped the long-term vision for a more context-aware, agentic assistant.

MongoDB AI Assistant in Compass

Company

MongoDB

Timeline

Sep 2025 - Dec 2025

Role

Lead UX Researcher

Team

Product Manager, Design Lead, Staff Product Designer, 2 Product Designers

Participants

Developers, Engineering Managers, CTOs, Technical Founders, AI Engineers

Methods

Moderated interviews, live product walkthrough, concept testing

The Problem

The team noticed a plateau in Compass usage. With AI assistants becoming an expectation in developer tools, they suspected users were turning to external tools with built-in assistants, like Copilot and Claude Code, to support their MongoDB workflows. Without an AI assistant, Compass risked falling behind.

But these tools often led to a frustrating experience: users had to switch between windows, rely on trial and error, and work with generic answers that weren’t tailored to their MongoDB data.

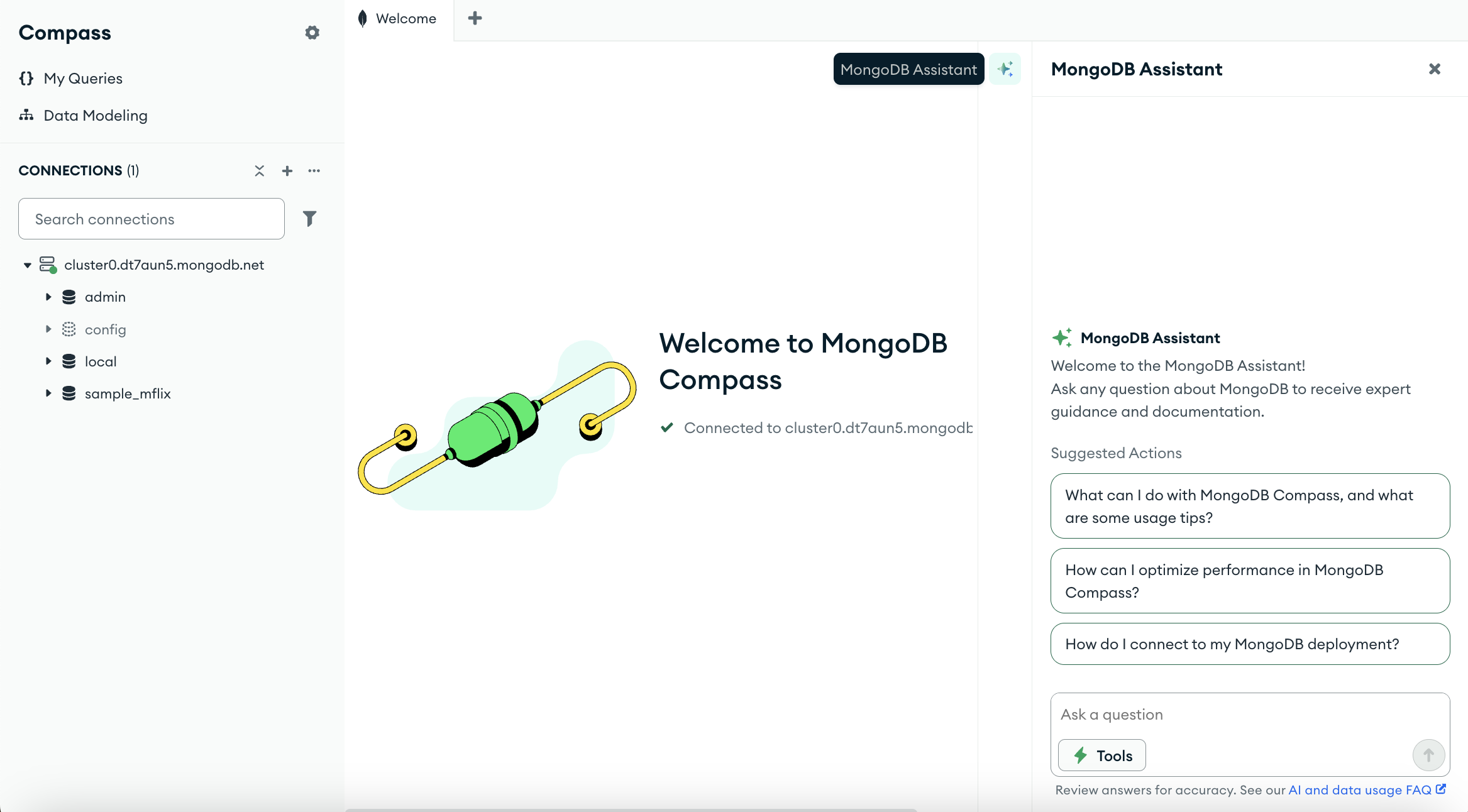

In response, MongoDB launched its own AI assistant directly in Compass. The first version was focused on answering user questions. Once it was live, the team needed a deeper understanding of what developers needed from an in-product assistant and where the experience should go next.

My Role

I led research to guide feature prioritization and shape the AI assistant’s role within Compass.

At the same time, other teams across MongoDB were also exploring assistant integrations in different products. I initiated coordination with designers running related research to align scope, ensure consistency across experiences, and avoid duplicating work.

My research focused on understanding trust, adoption, and user expectations, while the designers I coordinated with explored copy, branding, and visual patterns in parallel. Together, our studies informed the assistant’s next release and shaped MongoDB’s AI assistant strategy across multiple teams.

What We Needed to Learn

I set out to understand the role of an in-product assistant within developers’ workflows and what they would expect it to do beyond answering questions.

This led to three key questions:

Workflow Integration

Where should an AI assistant fit into developers' workflows, and should it support or replace existing Compass tools?

AI-Assisted Actions

What should the assistant enable developers to do beyond answering questions, like automating tasks or suggesting next steps?

Trust & Adoption

How do developers compare an in-product assistant to external AI tools, and what builds or breaks their trust?

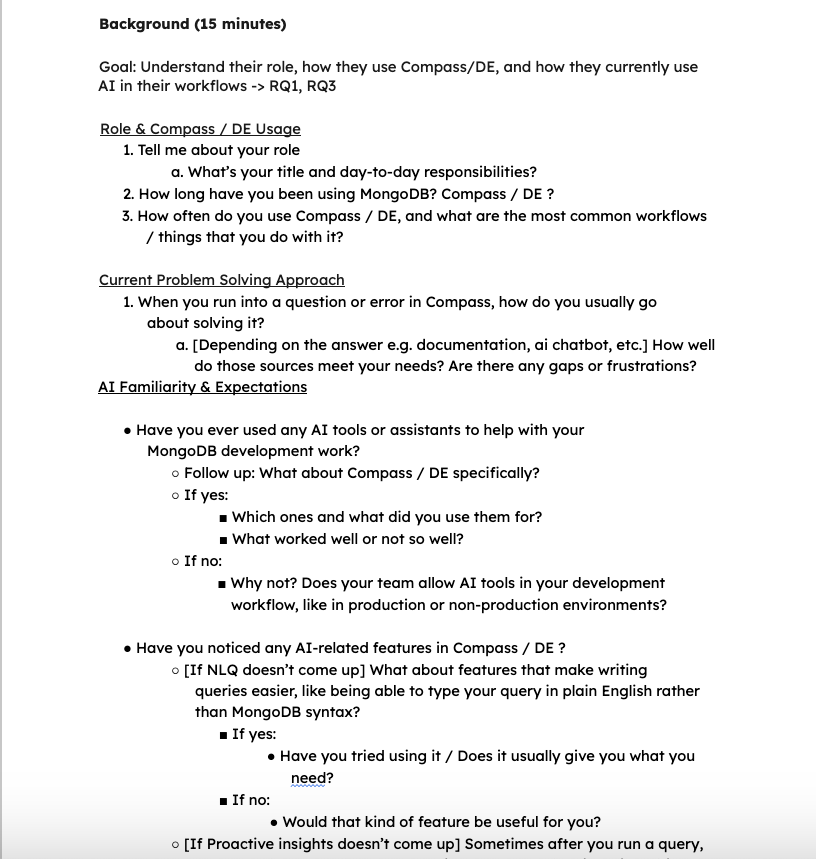

I designed the study to focus on where the assistant should go next, rather than evaluating the usability of the current experience.

To do this, I combined interviews, a live product walkthrough, and concept testing, moving from how developers think about AI, to how they use it in their work today, to what they’d want it to do next.

Each session covered three phases:

How I Framed the Research

mental models → current reality → future possibilities

Session Structure


Part 1 - Mental Models

Before showing the product or any prototypes, I ran semi-structured interviews to understand how developers think about AI tools, their workflows and pain points in Compass, and what builds their trust in an assistant.

Starting without product exposure helped avoid priming, so I could see how they naturally think about AI.


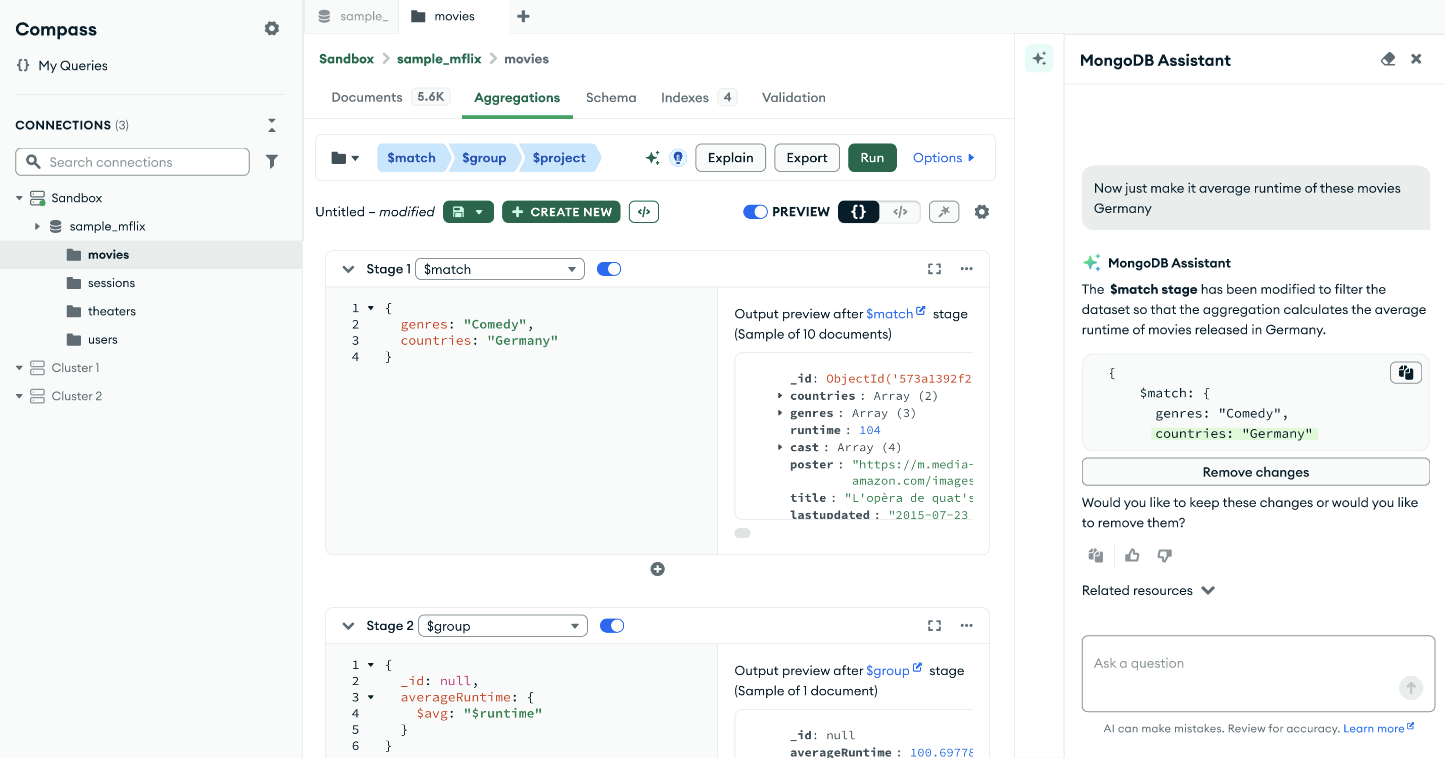

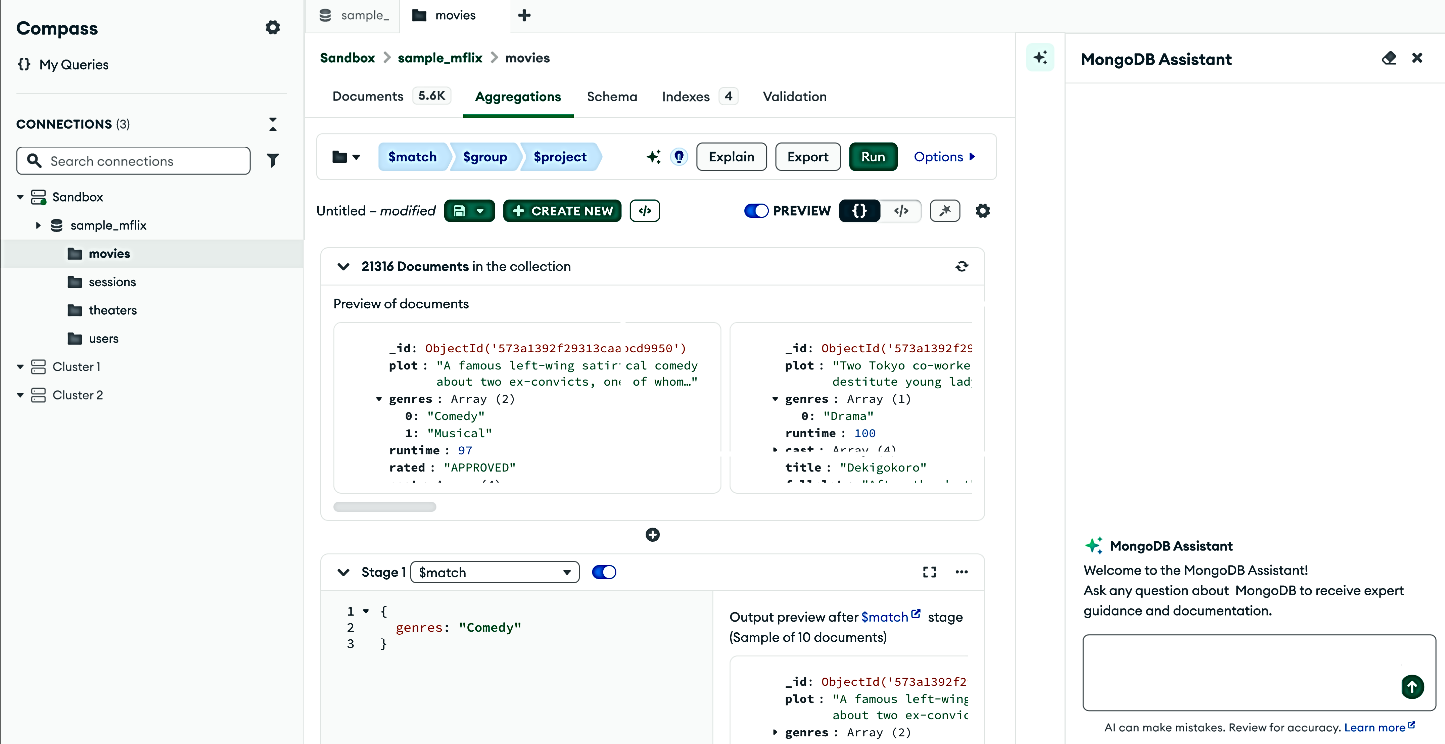

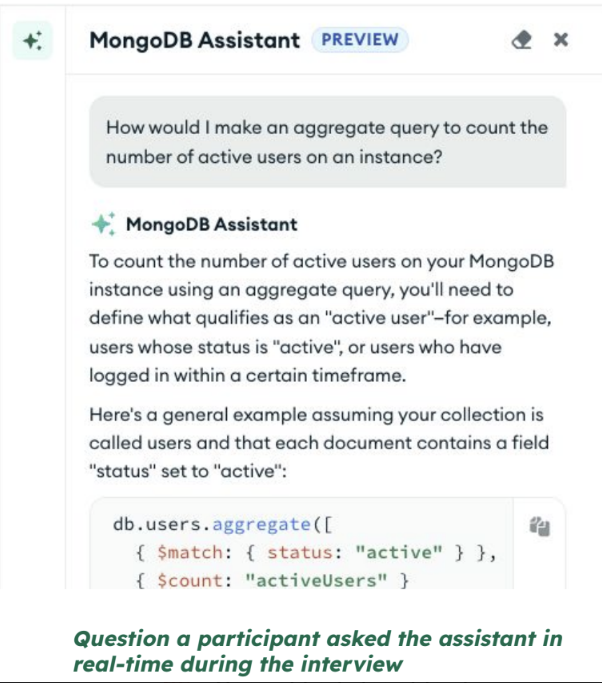

Part 2 - Current Reality

Next, I asked participants to open the current assistant in Compass and ask it a real question from their everyday work. As they interacted with it, they thought aloud about whether the response was useful and why.

Using the actual product allowed me to see real-time reactions rather than hypothetical ones.


Part 3 - Future Possibilities

After using the current assistant, participants interacted with prototypes showing potential future features, like the assistant automating aggregation pipelines.

This let them compare what exists today with what could be possible, giving us feedback on automation, confirmations, and ways to revert automated actions.

Key Insights & Product Decisions

Visibility impacts adoption

Most participants had never noticed the assistant before the session. Even users who were aware of it weren’t sure what it could do or when to use it.

“I didn’t know there was an AI tool. I never saw anything prompting me to use it.” — Participant 7

I recommended showing the assistant at the right moments in the user’s workflow and making it clear what it can help with, so it’s easier to discover.

What shipped

Added new entry points to the roadmap to introduce the assistant in context. Introduced suggested prompts and clearer descriptions of what the assistant can do, making it easier to understand and get started. Some of these changes are now live in production.

Guided prompts and descriptions now live in production, making it easier for users to find and understand the assistant.

Context awareness is a key differentiator

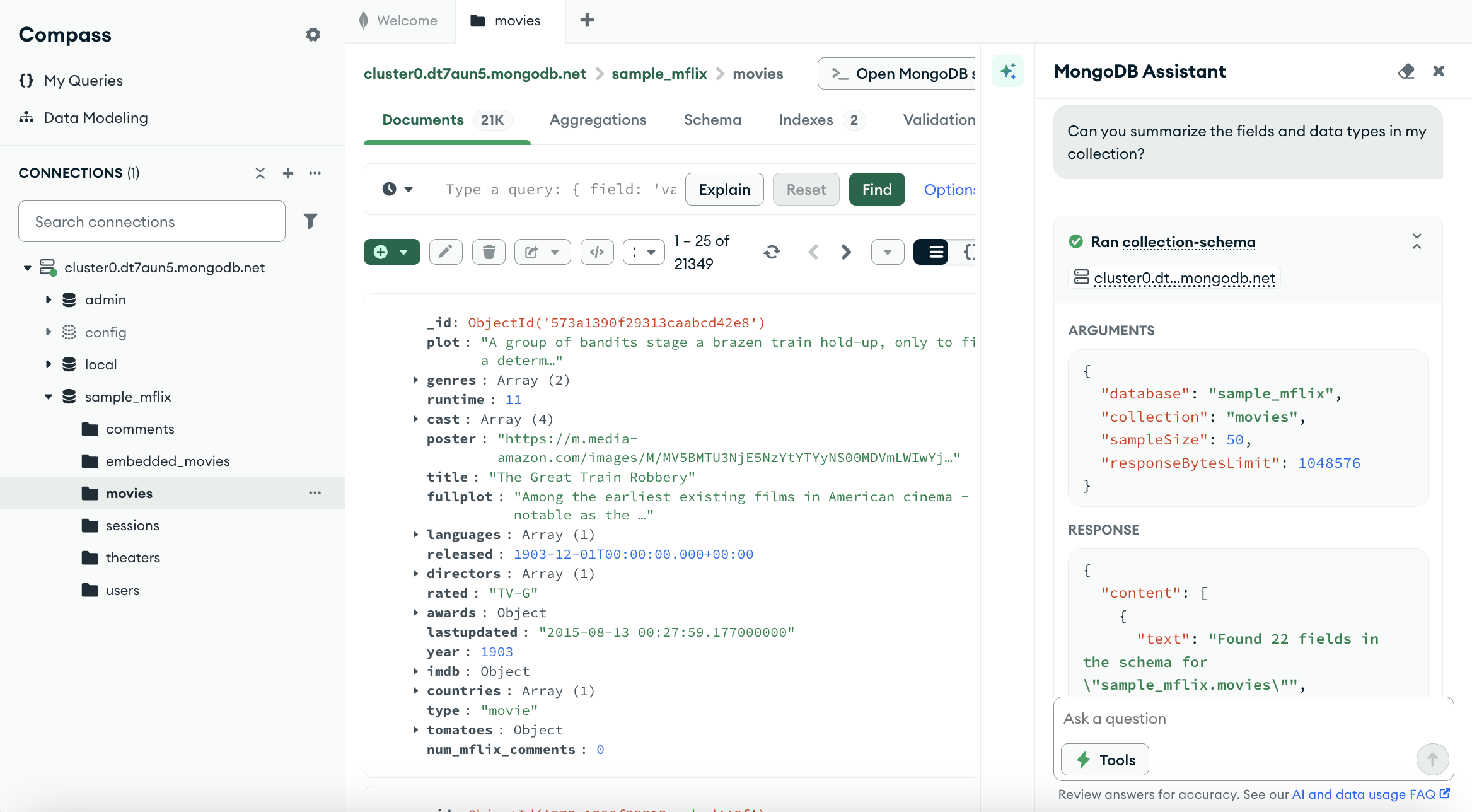

All participants expected the assistant to understand what they were working on and the data involved, such as the active tab, collection, or schema, without having to explain it. Instead, responses felt generic, making the assistant feel no different from other AI tools.

“It’s a generic answer that doesn’t have context. Compass should be able to figure out what fields I have… it would help to have context like collection or schema” — Participant 3

They also expected chat history to be saved so they wouldn’t have to repeat context, and they wanted more proactive support, like identifying performance issues or suggesting improvements.

I recommended having the assistant use the user’s context so it can respond to what they are working on, making it more valuable than alternatives.

What shipped

Context-aware responses were prioritized and are now live in production. The assistant now uses the user’s current database and collection to generate more relevant responses, help interpret results, debug issues, and suggest improvements. Chat history persistence was also added to the roadmap.

Context-aware responses now live in production, using the active collection to provide more relevant answers and support data exploration.

Trust requires transparency

Users weren’t sure what the assistant could access or whether any data was sent outside of MongoDB, which made them hesitant to use it on sensitive or production data.

What shipped

Prioritized clearer communication about what the assistant can access and how data is handled, making this information visible upfront to address trust concerns.

“So this MongoDB assistant, do we get visibility into whether data is traveling outside Mongo… or stays in our cluster? This is really important, especially when we’re dealing with personal data.”

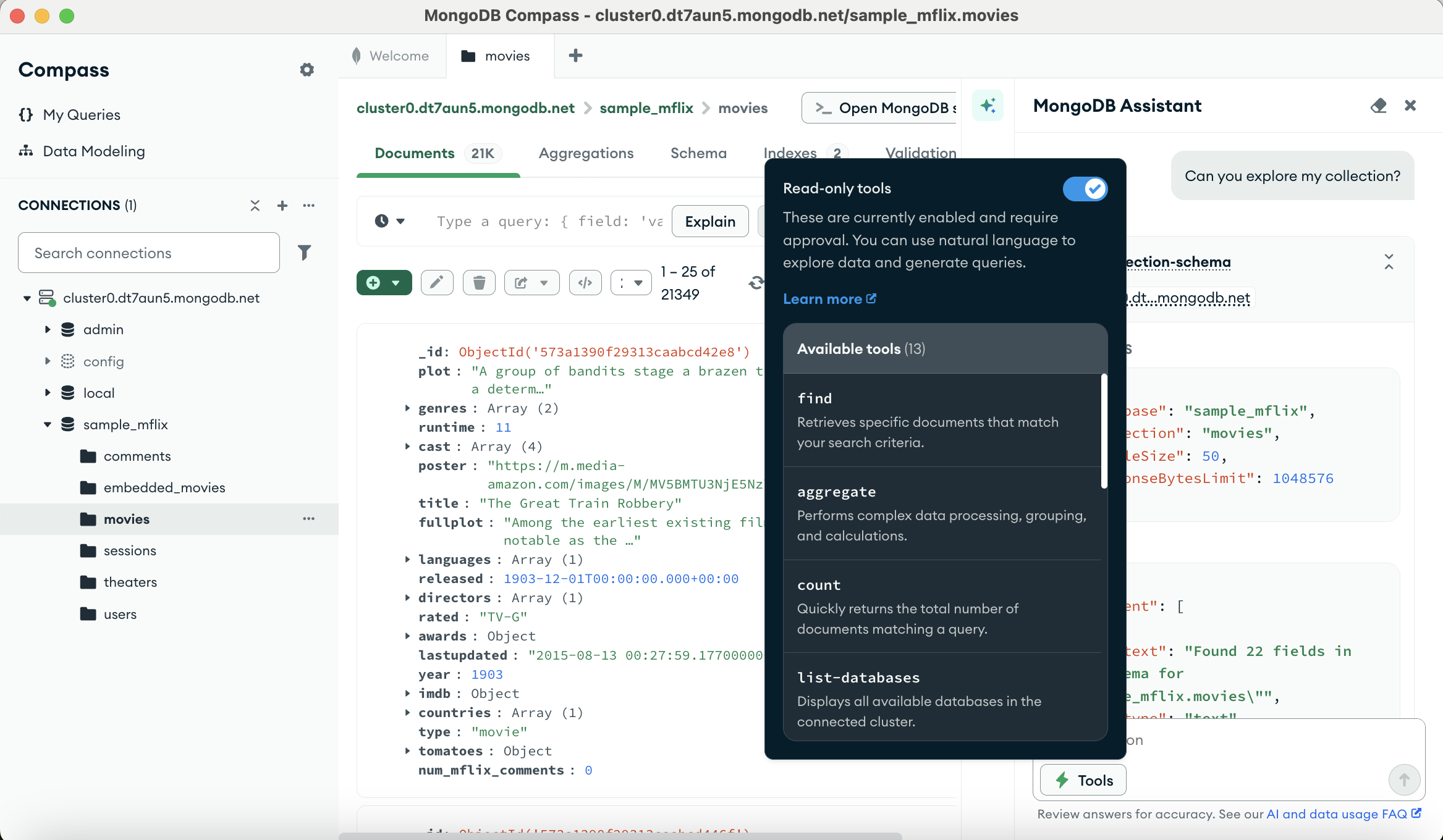

Safe AI actions need user control

Users were open to AI-assisted actions, but only if they were in control. They wanted to review, confirm, and undo changes, especially for anything impacting production data. Mistakes can break trust and lead users to stop using the feature.

“I like to be able to not just have an AI going rogue on my code. I like to see it and review it before I just paste it somewhere or make some modifications.” — Participant 6

I recommended designing AI actions with clear boundaries: low-risk actions can be run easily, while high-risk changes need review and approval.

What shipped

The assistant requires user approval before running actions on a user’s data, and this is live in production. We also defined a design principle to prioritize user control and data safety over automation.

Read-only AI-assisted actions are now live in production, requiring user approval before running.

Impact


Shaped what shipped

This research shaped what shipped in the Compass AI assistant release, including context-aware responses and suggested prompts. It also informed roadmap priorities like entry points and chat history.


Established design principle

In collaboration with the designers, this work defined a design principle for AI-assisted actions, emphasizing user control and approval for actions impacting their data.


Informed roadmap across MongoDB

This research helped shape MongoDB’s broader assistant strategy and supported multiple teams building assistant experiences across products.